Claude at Scale: Cost and Performance Without Quality Loss

30 Mar 2026


Enterprise AI spend is growing faster than almost any technology budget line. Most companies run a Claude pilot, forecast costs from a few hundred test requests, deploy at scale without accounting for production usage patterns, and then find that the monthly invoice is three to five times the projection. The gap is real and predictable. Token math is not complicated; the problem is that production load patterns look nothing like pilot conditions.

As an IT partner with 15+ years of experience, Cleveroad helps enterprises manage Claude-based systems at scale. We have worked with teams running millions of API requests each month. In this article, we explain where AI costs come from, how to reduce them with the right architecture, and how to track savings across teams and products.

Key takeaways:

- An enterprise processing 1 million log-analysis requests per month on Claude Sonnet 4.6 faces a $330,000/month baseline cost before any optimization.

- Claude API costs can be significantly reduced by adopting a layered architecture that combines data preparation, model routing, prompt caching, and batch processing.

- The layer most engineering teams skip is cost governance: budget attribution by team, rate enforcement, and observability across the full deployment.

Read on to understand each layer, the tradeoffs that come with it, and what the full cost picture looks like when you move beyond API tokens.

Why Enterprise AI Costs Scale Faster Than Expected in Production

Most enterprises that hit a surprise Claude bill follow the same pattern: token volumes surge in production, and model choices made for quality fail under real load. At the same time, agent loops silently multiply the cost through retries. Left unaddressed, these factors turn predictable pilot budgets into uncontrolled production spend.

Controlling them requires understanding how cost behaves at scale.

How token volume breaks cost forecasts in production

A workflow that handles 10 support tickets in testing often scales to 10,000 in production. If the prompt includes full conversation history, previous ticket context, retrieved data from external systems (such as CRM records, logs, or user profiles), and product documentation from a knowledge base, input tokens per request can reach 50,000 to 100,000 or more.

This is consistent with real-world LLM deployment patterns, where long-context prompts quickly accumulate tokens due to multi-source retrieval and conversation chaining. For example, OpenAI notes that modern models already support context windows of up to 128K+ tokens, which are actively used in enterprise scenarios involving large documents and conversation histories.

Most cost forecasts, however, are based on a simplified prompt from the pilot phase and fail to reflect this growth in production-level context.
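The forecast gap can be reproduced with simple arithmetic. The sketch below uses the Sonnet 4.6 prices quoted in this article ($3 input, $15 output per MTok). The 20,000-token pilot prompt and the 2,000 output tokens per request are illustrative assumptions, the latter chosen so that the production case matches the $330,000/month baseline cited in this article.

```python
def monthly_cost_usd(requests, input_tokens, output_tokens,
                     input_price_per_mtok, output_price_per_mtok):
    """Monthly API cost in USD for a workload with uniform request size."""
    input_mtok = requests * input_tokens / 1_000_000
    output_mtok = requests * output_tokens / 1_000_000
    return input_mtok * input_price_per_mtok + output_mtok * output_price_per_mtok


# Sonnet 4.6 prices as quoted in this article (USD per million tokens).
SONNET_INPUT_PRICE = 3.0
SONNET_OUTPUT_PRICE = 15.0

REQUESTS_PER_MONTH = 1_000_000
OUTPUT_TOKENS = 2_000  # assumption, not stated in the article

# Pilot-style prompt: short context (hypothetical 20,000 input tokens).
pilot_forecast = monthly_cost_usd(
    REQUESTS_PER_MONTH, 20_000, OUTPUT_TOKENS,
    SONNET_INPUT_PRICE, SONNET_OUTPUT_PRICE,
)

# Production prompt: history, retrieved records, and docs (100,000 input tokens).
production_cost = monthly_cost_usd(
    REQUESTS_PER_MONTH, 100_000, OUTPUT_TOKENS,
    SONNET_INPUT_PRICE, SONNET_OUTPUT_PRICE,
)

print(f"pilot-based forecast: ${pilot_forecast:,.0f}/month")
print(f"production cost:      ${production_cost:,.0f}/month")
print(f"gap: {production_cost / pilot_forecast:.1f}x")
```

Under these assumptions the request count is identical in both cases; the entire gap comes from the growth of input context per request.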

How agent loops quietly multiply API costs through retries

Agentic workflows introduce a cost pattern that flat-rate estimates often fail to capture. When an agent does not complete a task successfully and triggers a retry, whether due to an invalid tool call, a validation failure, or an incomplete downstream response, the system incurs the full token cost again.

In production environments, these retries are not edge cases. As task complexity increases, retry cycles accumulate, creating a noticeable gap between projected and actual usage and effectively increasing the total number of billable requests without adding business value.

The architectural fix focuses on three areas: validating outputs before triggering retries, enforcing structured responses through tool-based interactions, and routing simple recovery tasks to lower-cost model tiers rather than rerunning the full agent at premium model pricing.
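A minimal sketch of the first and third ideas, assuming the agent is expected to return a JSON object: the output is validated locally, and a failed validation triggers a small repair request on a cheaper tier instead of a full rerun. The tier labels and the `call_model` callable are hypothetical stand-ins for whatever client wrapper a team already has; nothing here calls a real API.

```python
import json
from typing import Callable

PRIMARY_MODEL = "premium-tier"    # placeholder label, not a real model ID
RECOVERY_MODEL = "low-cost-tier"  # placeholder label, not a real model ID


def is_valid(raw: str, required_keys: set[str]) -> bool:
    """Cheap local check: output must be a JSON object with the expected keys."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and required_keys.issubset(data)


def run_with_recovery(prompt: str,
                      call_model: Callable[[str, str], str],
                      required_keys: set[str],
                      max_repairs: int = 2) -> tuple[str, list[str]]:
    """Run once on the primary tier; repair invalid output on the cheaper tier.

    Returns the final output and the list of tiers that were billed.
    """
    billed = [PRIMARY_MODEL]
    output = call_model(PRIMARY_MODEL, prompt)
    for _ in range(max_repairs):
        if is_valid(output, required_keys):
            return output, billed
        # Send only the broken output for repair, not the full agent context.
        repair_prompt = (
            "Rewrite the following as a JSON object with keys "
            f"{sorted(required_keys)}:\n{output}"
        )
        billed.append(RECOVERY_MODEL)
        output = call_model(RECOVERY_MODEL, repair_prompt)
    if not is_valid(output, required_keys):
        raise ValueError("output still invalid after repair attempts")
    return output, billed
```

The design choice is that a retry pays only for the short repair prompt at the lower-cost tier, so a formatting failure no longer doubles the cost of the original request.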

The Model Tier Decision: When to Use Haiku, Sonnet, and Opus

Model selection is the highest-leverage cost decision in any Claude deployment. The right choice is not always the cheapest model; it is the cheapest model that holds quality for a specific task type.

The table below maps common enterprise workloads to the appropriate model tier, covering task type, recommended model, as well as the reasoning behind each routing decision.

| Task type | Recommended model | Reasoning |
| --- | --- | --- |
| Classification, extraction, summarization, and tagging of structured data | Haiku 4.5 ($1/$5 per MTok) | Pattern-matching tasks; speed and cost matter more than reasoning depth |
| General coding, document analysis, conversational AI, and workflow automation | Sonnet 4.6 ($3/$15 per MTok) | Balanced; handles most production workflows at a sustainable cost |
| Complex reasoning, multi-step agent tasks, security analysis, and decision support systems | Opus 4.6 ($5/$25 per MTok) | Reserved for tasks where reasoning quality directly affects outcome accuracy |
| High-volume offline processing (reports, batch summaries) | Haiku 4.5 + Batch API | 50% additional discount on already low-cost model |
| Tasks requiring Sonnet-level accuracy at Haiku cost | Model distillation (preview) | Transfers Sonnet intelligence to Haiku price point; up to 70%+ cost reduction where applicable |

The table defines the baseline, but real cost control depends on how these choices hold under production constraints. We’ll define the minimum quality threshold for each workload and identify where techniques like model distillation deliver meaningful cost savings at scale.

Quality thresholds without cost increase

The practical test for model routing is this: what is the minimum quality level that makes the output usable? For log summarization, a brief, accurate summary meets the bar. For a security anomaly analysis that drives an incident response, a missed signal is a business risk.

The routing decision must start with task criticality. Organizations that route by cost first and discover quality failures after launch spend more on remediation. Set the quality floor for each workload type before writing the routing logic.
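One way to encode "quality floor first, cost second" is to store the floor next to each workload and never route below it. The sketch below is illustrative: the tier labels are shorthand rather than API model IDs, and the workload assignments are assumptions based on the examples in this section.

```python
from dataclasses import dataclass

# Tiers ordered from cheapest to most capable (labels only, not API model IDs).
TIERS = ["haiku-4.5", "sonnet-4.6", "opus-4.6"]


@dataclass(frozen=True)
class Workload:
    name: str
    cost_preferred_tier: str  # tier the team would pick on cost alone
    quality_floor: str        # lowest tier that passed evaluation for this task


def route(workload: Workload) -> str:
    """Return the cost-preferred tier unless it falls below the quality floor."""
    return max(workload.cost_preferred_tier, workload.quality_floor,
               key=TIERS.index)


# Hypothetical workloads mirroring the examples in the text.
log_summary = Workload("log summarization", "haiku-4.5", "haiku-4.5")
anomaly_analysis = Workload("security anomaly analysis", "sonnet-4.6", "opus-4.6")

for w in (log_summary, anomaly_analysis):
    print(f"{w.name}: {route(w)}")
```

Because the floor is data rather than logic, it can be set from evaluation results before the routing code is written and tightened later without touching the router.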

Model distillation for cost savings at scale

For organizations running high-volume, well-defined workloads, Anthropic's model distillation transfers a larger model's learned behavior to a smaller one for specific tasks, delivering Sonnet-level accuracy at Haiku pricing. Available in preview on Amazon Bedrock as of mid-2025, it is the most significant cost lever for enterprises with stable, repeated workloads.

The tradeoff is narrow task fit. Distillation only pays off when a task type runs at sufficient volume and consistency to justify the training investment. Outside its training distribution, performance degrades. This is a specialized tool for mature, high-scale deployments.

Not sure which model tier fits your workload? Try Cleveroad's AI Strategy Advisor to map your workflows before committing to architecture decisions.

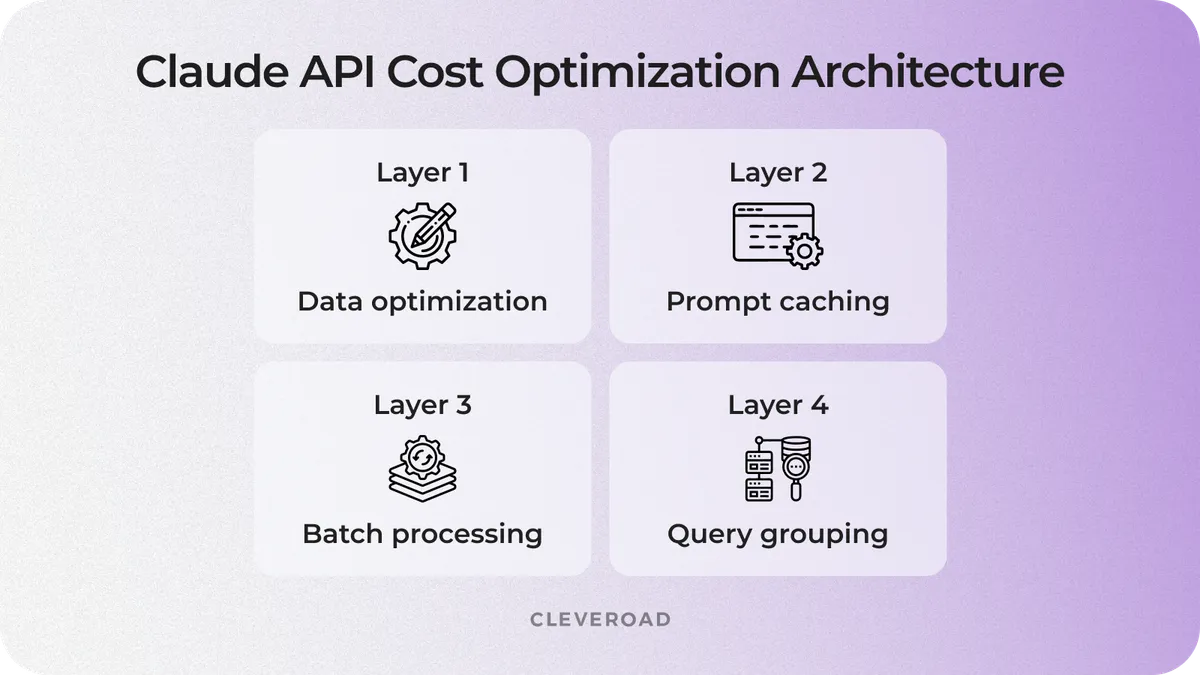

Major Architecture Decisions: Reducing Claude API Costs

Combined with the model routing decisions above, these four optimization layers work cumulatively, with each one reducing the cost baseline for the next. The sequence is critical: data preparation reduces overall volume, model routing determines the pricing tier, and caching, batching, and query grouping optimize the already reduced workload. When applied in order to a $330,000/month deployment, this strategy can bring monthly costs down to under $115,000.

The diagram below illustrates the cumulative cost reduction at each layer, anchored to a benchmark annual production workload.

4 architecture decisions reducing Claude API costs

Layer 1: Data and payload optimization

Cost reduction starts before the API call. Stripping redundant fields, compressing overly detailed formats, and filtering low-signal data before it reaches the API can reduce input token volume by 40%+ on structured workloads such as logs, tickets, or document batches. This preprocessing runs on your infrastructure before the API call and operates outside of billing, compounding every subsequent optimization.

For a $330,000/month baseline, a 43% input reduction translates into roughly $1.7 million in annual savings before any other lever is applied. One important consideration is that over-compression can remove the critical signal along with noise. A quality check that compares compressed and original outputs is required before deploying at scale.
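As a rough illustration of this layer, the sketch below drops low-signal log records and redundant fields before a prompt is built, then estimates the reduction. The field names, the severity filter, and the 4-characters-per-token estimate are all assumptions for the example; a real pipeline would use its own schema and a proper token counter.

```python
import json

KEEP_FIELDS = ("timestamp", "level", "service", "message")  # hypothetical schema
LOW_SIGNAL_LEVELS = {"DEBUG", "TRACE"}


def compact_logs(records):
    """Drop low-signal records and redundant fields before building the prompt."""
    kept = []
    for rec in records:
        if rec.get("level") in LOW_SIGNAL_LEVELS:
            continue
        kept.append({k: rec[k] for k in KEEP_FIELDS if k in rec})
    return kept


def approx_tokens(payload):
    """Very rough size estimate: about 4 characters per token (assumption)."""
    return len(json.dumps(payload, separators=(",", ":"))) // 4


raw_logs = [
    {"timestamp": "2026-03-30T10:00:00Z", "level": "ERROR", "service": "billing",
     "message": "payment gateway timeout", "host": "ip-10-0-0-12", "pid": 4312,
     "trace_id": "a1b2c3d4e5f6", "build": "2026.03.1"},
    {"timestamp": "2026-03-30T10:00:01Z", "level": "DEBUG", "service": "billing",
     "message": "cache warm", "host": "ip-10-0-0-12", "pid": 4312,
     "trace_id": "a1b2c3d4e5f7", "build": "2026.03.1"},
    {"timestamp": "2026-03-30T10:00:02Z", "level": "WARN", "service": "auth",
     "message": "token refresh retried", "host": "ip-10-0-0-15", "pid": 977,
     "trace_id": "a1b2c3d4e5f8", "build": "2026.03.1"},
]

compact = compact_logs(raw_logs)
reduction = 1 - approx_tokens(compact) / approx_tokens(raw_logs)
print(f"records: {len(raw_logs)} -> {len(compact)}, "
      f"estimated input reduction: {reduction:.0%}")
```

The quality check mentioned above would sit next to this step: run the same evaluation set through the model with `raw_logs` and with `compact`, and compare the outputs before enabling the filter in production.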

Layer 2: Prompt caching

Prompt caching reduces input costs when the same context is reused across requests. For workloads with large shared inputs, it can lower input costs by up to 90%, turning repeated context from a major expense into a marginal one.

It works by storing processed inputs, such as system prompts or knowledge base content, and reusing them across requests at a discounted rate. Effective caching depends on separating stable shared context from request-specific data.
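The separation can be sketched as a request builder that puts the stable context in a system block marked for caching and keeps the per-request question in the user message. The payload shape follows Anthropic's Messages API as commonly documented (`cache_control` with type `ephemeral` on a system text block); treat it as an assumption to verify against the current API reference. The model ID is passed in by the caller, and the `max_tokens` value is arbitrary.

```python
def build_request(model: str, shared_context: str, user_query: str) -> dict:
    """Build a Messages API payload with a cacheable shared prefix.

    The shared context must be byte-identical across requests for the cache
    to be reused, so nothing request-specific belongs in the system block.
    """
    return {
        "model": model,
        "max_tokens": 1024,  # arbitrary value for the example
        "system": [
            {
                "type": "text",
                "text": shared_context,
                # Marks the end of the prefix that may be cached and reused.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Request-specific data stays outside the cached prefix.
        "messages": [{"role": "user", "content": user_query}],
    }


knowledge_base = "Product documentation and policies go here."  # placeholder
first = build_request("your-model-id", knowledge_base, "How do refunds work?")
second = build_request("your-model-id", knowledge_base, "What is the SLA?")
print(first["system"] == second["system"])  # shared prefix is identical
```

A common failure mode is interpolating a timestamp or user name into the system prompt, which changes the prefix on every request and defeats the cache.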

A one-time write cost (25% premium) is required to populate the cache, but it is amortized across repeated reads. Cache duration also matters: short-lived caches suit high-frequency workloads, while longer-lived caches support lower-frequency use cases.
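The amortization can be checked with a short calculation. The sketch below uses the figures from this section (25% write premium, up to 90% discount on cached reads) and assumes the best case in which every request after the first hits the cache within its lifetime. The token counts and request volume are illustrative assumptions.

```python
def uncached_input_cost(shared_tokens, unique_tokens, requests, price_per_mtok):
    """Input cost in USD when the shared context is re-sent at full price."""
    return (shared_tokens + unique_tokens) * requests * price_per_mtok / 1_000_000


def cached_input_cost(shared_tokens, unique_tokens, requests, price_per_mtok,
                      write_premium=0.25, read_discount=0.90):
    """Input cost in USD with one cache write followed by cache reads.

    Assumes every request after the first is a cache hit (best case).
    """
    write = shared_tokens * price_per_mtok * (1 + write_premium)
    reads = shared_tokens * price_per_mtok * (1 - read_discount) * (requests - 1)
    unique = unique_tokens * price_per_mtok * requests
    return (write + reads + unique) / 1_000_000


# Illustrative workload: 50,000 shared tokens, 1,000 unique tokens per request,
# 1,000 requests, at the $3/MTok Sonnet 4.6 input price quoted in this article.
baseline = uncached_input_cost(50_000, 1_000, 1_000, 3.0)
with_cache = cached_input_cost(50_000, 1_000, 1_000, 3.0)
print(f"uncached: ${baseline:.2f}, cached: ${with_cache:.2f}, "
      f"saving: {1 - with_cache / baseline:.0%}")
```

With a single request the cached path is more expensive because of the write premium, which is why caching only pays off when the same prefix is actually reused.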

Layer 3: Batch API

Batch processing reduces API costs for workloads that do not require real-time responses. By shifting eligible requests to asynchronous execution, it applies a 50% discount on both input and output tokens, making it one of the most direct cost levers for high-volume processing. It works by routing selected workloads through the Batch API, where requests are processed asynchronously and returned within a defined time window.

The trade-off is latency: responses are delivered within 24 hours. This makes batch processing suitable for use cases such as daily summaries, weekly reports, trend analysis, and document-processing queues.

For a $1.9 million annual baseline after routing optimization, moving 40% of requests to batch can reduce costs by approximately $380,000 per year. The key decision is operational: identify which workflows can tolerate delayed responses and prioritize those for batch execution.
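The figure above follows from the 50% discount applied to the batched share of spend. The sketch below reproduces it and adds a simple eligibility filter; it assumes cost is spread evenly across requests, and the workflow names and latency tolerances are hypothetical.

```python
BATCH_DISCOUNT = 0.50     # discount on input and output tokens, per this article
BATCH_WINDOW_HOURS = 24   # maximum turnaround cited in this article


def batch_savings(annual_spend_usd, batch_share):
    """Annual savings if `batch_share` of spend moves to the Batch API."""
    return annual_spend_usd * batch_share * BATCH_DISCOUNT


def batch_eligible(workflows):
    """Names of workflows whose consumers can wait for the batch window."""
    return [name for name, max_wait_hours in workflows.items()
            if max_wait_hours >= BATCH_WINDOW_HOURS]


# Hypothetical workflows with the longest delay each one can tolerate (hours).
workflows = {
    "daily summary": 24,
    "weekly report": 168,
    "live chat reply": 0.01,
    "incident triage": 0.25,
}

print(batch_eligible(workflows))
print(f"${batch_savings(1_900_000, 0.40):,.0f} saved per year")
```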

Layer 4: Query grouping and structured outputs

Query grouping reduces input costs by eliminating repeated context across multiple analyses. When several operations run on the same data, grouping them into a single request allows all results to be generated in a single input pass instead of multiple.

This method uses structured outputs to execute multiple tasks in one call, avoiding redundant token usage. For example, a security check, a compliance review, risk scoring, and a document summary sent separately require four input passes, whereas a grouped request requires only one.

On top of an already optimized architecture, query grouping typically delivers an additional 4–5% cost reduction. While smaller than earlier layers, it compounds at scale and becomes meaningful across high-volume workloads.
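A minimal sketch of grouping, assuming the model is asked to reply with one JSON object keyed by task: the document is sent once, and the reply is split back into per-task results. The task keys and instructions are hypothetical, and the parser reports missing keys so that one failed analysis does not silently invalidate the rest of the group.

```python
import json

# Hypothetical task set mirroring the example in the text.
TASKS = {
    "security_check": "List any security issues found.",
    "compliance_review": "List any compliance concerns.",
    "risk_score": "Rate overall risk from 1 to 5.",
    "summary": "Summarize the document in two sentences.",
}


def build_grouped_prompt(document, tasks):
    """Build one prompt that covers every task with a single copy of the document."""
    lines = [
        "Analyze the document below and answer every task.",
        "Reply with a single JSON object using exactly these keys:",
    ]
    lines += [f'- "{key}": {instruction}' for key, instruction in tasks.items()]
    lines += ["", "<document>", document, "</document>"]
    return "\n".join(lines)


def parse_grouped_response(raw, tasks):
    """Split one grouped reply into per-task results and report missing keys."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    results = {key: data[key] for key in tasks if key in data}
    missing = [key for key in tasks if key not in data]
    return results, missing


prompt = build_grouped_prompt("CONTRACT-TEXT", TASKS)
reply = '{"security_check": [], "risk_score": 2, "summary": "Low risk."}'
results, missing = parse_grouped_response(reply, TASKS)
print(missing)  # tasks that need a targeted follow-up request
```

Only the missing keys need a follow-up call, which keeps the parsing risk noted in the tradeoff table below from turning into a full rerun of the group.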

At Cleveroad, this optimization is defined at the design stage alongside token modeling and model routing, as well as cache architecture. It ensures cost efficiency is built into the system before production deployment.

What You Give Up When You Optimize: The Cost-Quality Tradeoff

Every cost optimization carries a tradeoff in output quality, latency, or data freshness, so the key decision is how much of it is acceptable for a specific workload. That depends on task criticality and risk tolerance, as well as how well quality is measured in production.

The table below maps each optimization to the gains and risks you face.

| Optimization | What you gain | What you risk |
| --- | --- | --- |
| Routing to Haiku | 70%+ cost reduction | Accuracy loss on reasoning-heavy tasks |
| Data compression | 40%+ input reduction | Signal loss if compression is too aggressive |
| Prompt caching | Up to 90% lower input cost on cached content | Stale cache serving outdated context |
| Batch API | 50% discount | 24-hour latency on potentially time-sensitive outputs |
| Query grouping | Reduced API calls | More complex output parsing; failure in one query affects the group |
| Model distillation | Sonnet accuracy at Haiku cost | Narrow task fit; degrades outside training distribution |

How to prevent hidden quality loss

Cost optimization without measurement creates unmanaged risk. Every routing change or compression step needs a before-and-after benchmark on a representative dataset. Quality criteria must reflect the task: coverage and factual accuracy for summarization, precision and recall for classification, as well as false negative rate for security analysis.

Tooling such as Anthropic’s evaluation framework, LangSmith, and Braintrust supports this process. The key requirement is defining quality metrics before introducing cost changes. If regressions appear weeks later, attribution becomes complex, and remediation costs increase.
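Independent of the tooling chosen, the before-and-after benchmark reduces to a regression gate. The sketch below compares a baseline and a candidate configuration on labeled examples using precision and recall, and rejects the candidate if either metric drops by more than a tolerance. The labels, data, and 2-point tolerance are illustrative assumptions.

```python
def precision_recall(predicted, actual, positive):
    """Precision and recall for one positive label over paired predictions."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p == positive and a == positive)
    fp = sum(1 for p, a in pairs if p == positive and a != positive)
    fn = sum(1 for p, a in pairs if p != positive and a == positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def passes_gate(baseline, candidate, actual, positive, max_drop=0.02):
    """True if the candidate loses at most `max_drop` on precision and recall."""
    base_p, base_r = precision_recall(baseline, actual, positive)
    cand_p, cand_r = precision_recall(candidate, actual, positive)
    return (base_p - cand_p) <= max_drop and (base_r - cand_r) <= max_drop


# Hypothetical labeled set for a security workload.
actual = ["anomaly", "ok", "anomaly", "ok", "anomaly"]
baseline_preds = ["anomaly", "ok", "anomaly", "ok", "anomaly"]   # current setup
candidate_preds = ["anomaly", "ok", "ok", "ok", "anomaly"]       # cheaper setup

print(precision_recall(candidate_preds, actual, "anomaly"))
print(passes_gate(baseline_preds, candidate_preds, actual, "anomaly"))
```

For security analysis, the false negative rate is one minus recall, so the missed anomaly in the candidate run is exactly what this gate is meant to catch before rollout.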

Where cutting costs creates compliance exposure

In regulated environments, output quality directly affects legal and audit exposure. A compliance summary in healthcare or a financial risk assessment carries liability. Routing such tasks to lower-cost models or compressing regulatory context introduces material compliance and audit risks that go beyond standard quality degradation.

For Healthcare (HIPAA) and financial services (SAMA, FMIA), cost-quality analysis must include a compliance dimension. When planning budgets, teams should align these risks with a realistic AI development cost estimate to account for model selection, infrastructure, and governance overhead.

Regional deployment options, such as Anthropic’s endpoints with data residency, add approximately 10% to the cost. This is a fixed requirement for regulated organizations and must be incorporated into financial planning from the outset.

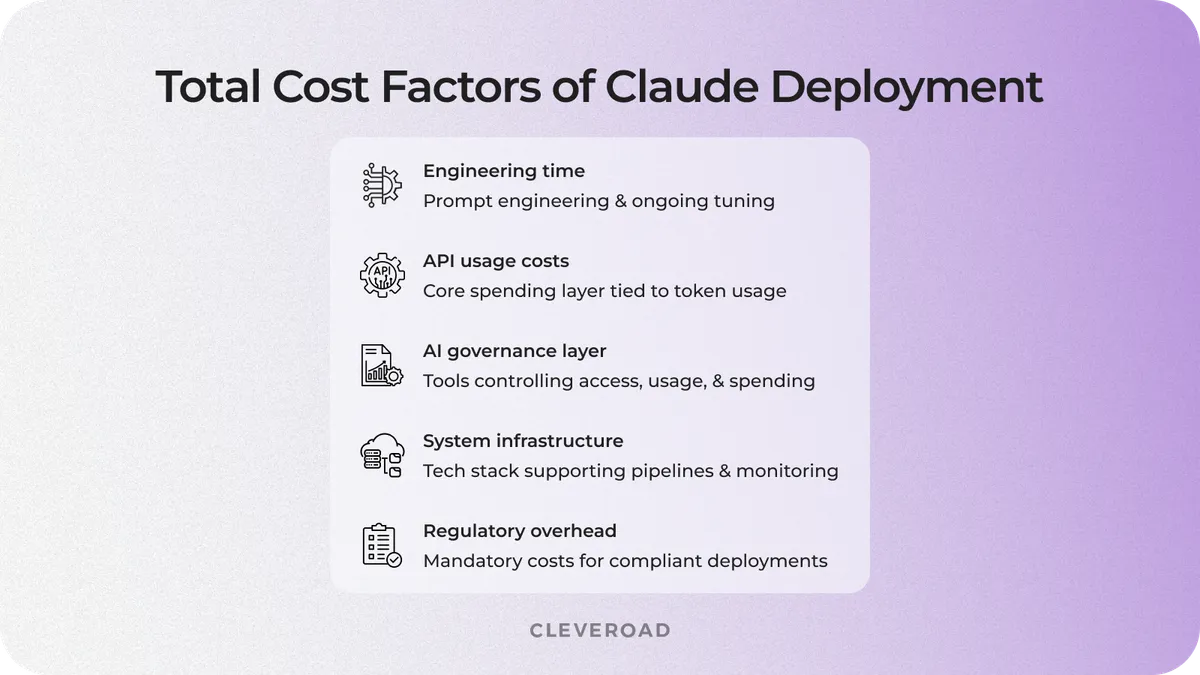

Total Cost of Enterprise Claude Deployment: Beyond API Tokens

API tokens are the most visible cost and the easiest to optimize, but they rarely reflect the full spend. A Total Cost of Ownership (TCO) assessment for enterprise Claude deployments comprises five distinct categories, and most budget models overlook at least two.

The five cost categories most enterprise AI budgets miss

Here is what the full cost picture looks like for a production Claude deployment:

- API tokens: API token costs include input and output tokens, cache writes, and batch processing discounts. This cost layer is the primary target for optimization efforts and can be reduced by more than 65% with the right architecture. For example, a baseline spend of $330,000 per month can be optimized down to approximately $114,000 per month.

- Infrastructure: Infrastructure costs cover vector databases for RAG, orchestration layers, monitoring systems, and CI/CD pipelines for AI workflows. While these expenses are typically lower than API costs at most stages, they increase steadily as system complexity and scale grow.

- Team time: Team-related costs include prompt engineering, evaluation pipeline maintenance, continuous model tuning, and adaptation to new Claude model releases. These efforts are often underestimated or excluded from initial budgets, yet they consistently emerge later as additional engineering workload and unplanned costs.

- Governance tooling: Governance tooling includes AI gateway layers, observability platforms, budget control systems, and access management tools that regulate how different systems interact with the API. These solutions are either developed in-house or implemented via third-party vendors. Moreover, governance tooling requires ongoing investment, and costs increase as more business units and products connect to the API.

- Compliance overhead in regulated industries: Compliance-related costs include data residency requirements, audit logging, and security reviews for API integrations. Anthropic’s regional endpoint option introduces an additional 10% pricing multiplier for deployments that require strict geographic data controls. In industries such as Healthcare and FinTech, these investments are mandatory and must be accounted for from the outset.

Cost factors of Claude deployment

When to build vs. when to work with a partner

Optimizing Claude costs is achievable in-house, but it comes at a hidden cost. Even after reducing API spend by 65%, software development experts still need to invest in prompt engineering, evaluation pipelines, governance systems, and ongoing maintenance as models evolve.

The real decision is whether your engineering team should spend time building AI infrastructure or focusing on the product it is meant to support. For most enterprise IT specialists, AI infrastructure is not a core competency.

This is where the economics shift. A partner with a proven architecture and established methodology reduces both time-to-value and internal engineering overhead. Rebuilding optimization and governance internally often costs more than expected, with the impact showing up as delayed product delivery rather than visible infrastructure spend.

To ensure cost-efficient Claude deployment from day one, use Cleveroad’s AI-assisted development services that include cost architecture and governance as part of the delivery scope.

Why Cleveroad for Enterprise Claude Deployment

Cleveroad is a custom software development company headquartered in Estonia, Central and Northern Europe. Since 2011, our experts have been building enterprise solutions across Healthcare, FinTech, Logistics, Education, and Media. In 2025, Cleveroad ranked 11th on the Clutch 1000 and earned Clutch Global Leader recognition for Fall 2025.

What Cleveroad brings to enterprise Claude deployments:

- Cost architecture designed before the first API call: Cleveroad specialists define token modeling, model routing logic, cache architecture, and governance scope at the design stage to control costs from day one.

- Domain expertise in regulated industries: We build solutions for Healthcare (HIPAA), FinTech (SAMA, FMIA), and Logistics, where compliance and data residency directly shape architecture and total cost.

- Full-cycle software development and delivery: Our experts handle architecture design, deployment, evaluation, and continuous maintenance to keep your solution aligned with evolving Claude models.

- Engagement flexibility: Cleveroad offers Dedicated Team, Staff Augmentation services, and Project-Based models to align with your team structure, timeline, and delivery goals.

Our AI development expertise

Over the years, our team has built AI-driven platforms that support large-scale operations and real-time decision-making across data-intensive environments. Here is one example of how that engineering discipline applies to production-ready AI systems in Cleveroad practice.

Our client was a K–12 STEM education provider that struggled with fragmented operations, manual scheduling, and limited visibility into performance metrics. The organization needed a centralized AI-powered platform to automate workflows and optimize class scheduling, as well as provide actionable insights for students and instructors.

The Cleveroad team designed and developed an AI-powered operations platform that unified scheduling, performance tracking, and analytics into a single system. The solution enabled automated planning, improved resource allocation, and real-time visibility into operational data.

As a result, the client reduced manual workload, improved decision-making speed, and scaled program delivery without increasing operational overhead.

The same principle applies to Claude deployments: early architecture decisions around workflow automation, data pipelines, and model integration define scalability, cost efficiency, and long-term system performance. Explore the AI Operations Platform for K–12 STEM Provider case in detail.

Ready to structure your Claude deployment for cost governance from the start? Our team is available for an initial architecture consultation before any code is written.

Build a cost-efficient Claude-based system with us

With 15+ years of experience in enterprise software delivery, our team designs Claude-based solutions that stay within budget, meet compliance requirements, and scale reliably from day one.

How much does Claude cost at enterprise scale?

It depends on workload type, request volume, and model selection. An enterprise processing one million requests monthly on Sonnet 4.6 with 100,000 input tokens per request faces roughly $330,000/month before optimization. With layered architecture covering data preparation, model routing, caching, and batch processing, the baseline drops to approximately $114,000/month, a 65% reduction. Real costs vary by workload. The starting point is always instrumentation: you need to know what is actually flowing through the API before you can reduce it meaningfully.

What is the difference between prompt caching and the Batch API?

These two techniques serve different patterns and can be combined. Prompt caching reduces cost on repeated inputs within a session window; it targets same-context, multiple-query patterns where a large shared context is re-sent with each request. The Batch API reduces the cost of any asynchronous request by 50%, regardless of whether the context repeats. A batch job that also caches a shared system prompt can get both discounts at once.

When should you use Haiku, Sonnet, or Opus?

- Haiku 4.5: high-volume, pattern-matching tasks such as classification, extraction, and summarization of structured data. Speed and cost matter more than reasoning depth.

- Sonnet 4.6: most production workloads, including coding, document analysis, and conversational AI. Handles the majority of enterprise use cases at sustainable cost.

- Opus 4.6: tasks where reasoning depth directly affects outcome quality and cost is secondary, such as complex agent tasks, security analysis, and long-horizon reasoning.

What is model distillation, and when does it pay off?

Model distillation transfers a larger model's learned behavior to a smaller model for a specific task, delivering Sonnet-level accuracy at Haiku pricing. It is available in preview on Amazon Bedrock as of mid-2025. It pays off only for well-defined, high-volume, stable workloads where the task distribution is consistent enough to train on. Outside its training distribution, a distilled model degrades. This is a specialized option for mature, high-scale deployments.

How do you attribute and control Claude spend across teams?

Through an AI gateway layer that centralizes API traffic, assigns virtual keys by team or product, enforces per-team budget limits, and logs token consumption at that level of granularity. Anthropic's Console provides organization-level visibility; team-level attribution requires a gateway. Define budget enforcement rules such as hard blocks, fallback routing, escalation alerts, and audit logs before deployment. Discovering you need these controls after the first budget exceedance means you are already behind.
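The enforcement rules named above can be sketched as a small in-memory policy check. This is an illustration only: the class names, thresholds, and decision labels are hypothetical, and a production gateway would persist spend, meter actual token usage after each response, and wire alerts into real channels.

```python
from dataclasses import dataclass


@dataclass
class TeamBudget:
    monthly_limit_usd: float
    alert_at: float = 0.80      # fraction of the limit that triggers an alert
    fallback_at: float = 0.95   # fraction at which traffic moves to a cheaper tier
    spent_usd: float = 0.0


class BudgetGateway:
    """Decides per request whether a team may spend, and records every decision."""

    def __init__(self, budgets):
        self.budgets = budgets
        self.audit_log = []

    def authorize(self, team, estimated_cost_usd):
        budget = self.budgets[team]
        projected = budget.spent_usd + estimated_cost_usd
        if projected > budget.monthly_limit_usd:
            decision = "block"                 # hard block
        elif projected > budget.monthly_limit_usd * budget.fallback_at:
            decision = "fallback"              # route to a lower-cost tier
        elif projected > budget.monthly_limit_usd * budget.alert_at:
            decision = "allow_with_alert"      # escalation alert
        else:
            decision = "allow"
        if decision != "block":
            # Simplification: a fallback request is charged at the original estimate.
            budget.spent_usd = projected
        self.audit_log.append((team, estimated_cost_usd, decision))
        return decision


gateway = BudgetGateway({"support": TeamBudget(monthly_limit_usd=100.0)})
decisions = [gateway.authorize("support", cost) for cost in (50.0, 35.0, 12.0, 10.0)]
print(decisions)
```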

Evgeniy Altynpara is a CTO and member of the Forbes Councils’ community of tech professionals. He is an expert in software development and technological entrepreneurship and has 10+ years of experience in digital transformation consulting in Healthcare, FinTech, Supply Chain and Logistics.
