MCP Security: A Practical Guide for Enterprises Deploying AI Agents

27 Mar 2026


AI agents built on the Model Context Protocol (MCP) can query databases, write to file systems, and trigger automated workflows with a single natural-language instruction. That capability is what makes MCP a meaningful shift in enterprise software, and it is also what makes it risky: a single misconfigured MCP deployment can expose production data and trigger unauthorized cross-system actions, leaving no recoverable audit trail of what happened.

At Cleveroad, we have built agentic AI systems for enterprise clients in Healthcare and FinTech. Both are industries where a permission error carries legal and financial consequences. We have seen issues surface in real deployments during the transition from pilot to production, especially around access model design, approval flows, or audit instrumentation. This guide covers what we have seen go wrong in MCP deployments and what a secure implementation looks like in practice.

Key takeaways:

- The most critical MCP security risks emerge where access control, credential management, and auditability intersect

- MCP agents make autonomous decisions about tool sequencing. Traditional API security models were not designed for that threat model

- The MCP protocol includes authentication hooks, while RBAC and consent controls for chained actions must be implemented separately. Enforcement falls on the implementer.

Keep reading this guide for a concrete breakdown of the MCP attack surface, a seven-step implementation sequence, real-world security risks in enterprise deployments, and a self-assessment for organizations already running MCP in production.

MCP Security by Design: What You Get and What You Must Build to Stay Safe

Model Context Protocol (MCP) defines how AI agents connect to external systems and access data. While it establishes the foundation for authentication and communication, a complete enterprise security model requires additional controls implemented at the architecture level. Below, we break down what MCP includes by design and what must be added for safe production use.

MCP provides foundational security primitives such as authentication hooks, including OAuth2 and API keys, along with recommended transport-level protection via TLS. These mechanisms ensure that requests can be authenticated, while control over how agent-driven actions are executed across systems remains outside their scope.

Critical security controls remain outside the protocol scope: Role-Based Access Control (RBAC), session management, runtime scope validation, and consent handling for chained tool calls must be implemented by the integrator. Environments that lack these controls allow agents to execute cross-system actions without explicit approval, creating gaps in traceability and policy enforcement.

On the “must build” side, enterprises need to implement RBAC per tool call, token rotation policies, runtime scope validation, consent handling for multi-step agent actions and immutable audit logging, as well as supply chain governance for MCP servers. The gap between protocol-level capabilities and production-grade security is significant, and in practice, many failures emerge within this boundary.

To clearly separate what MCP provides out of the box and what must be implemented for production-grade security, use the breakdown below:

| What MCP provides | What you must build on top of MCP |
| --- | --- |
| Authentication hooks (OAuth2, API keys) | Role-based access control per tool call |
| TLS recommendations for secure transport | Session management and lifecycle control |
| Baseline security guidelines in official spec | Runtime scope validation and token rotation |
| Standardized interface for tool access | Consent handling for multi-step agent actions |
| | Immutable audit logging across agent actions |
| | Supply chain governance for MCP servers |

Why MCP security is different from regular API security

MCP changes the security model: the focus moves from validating individual requests to governing agent-driven action chains. This shift requires a different approach to authorization and auditability.

Let’s explore further to see how the MCP security model affects real-world enterprise systems.

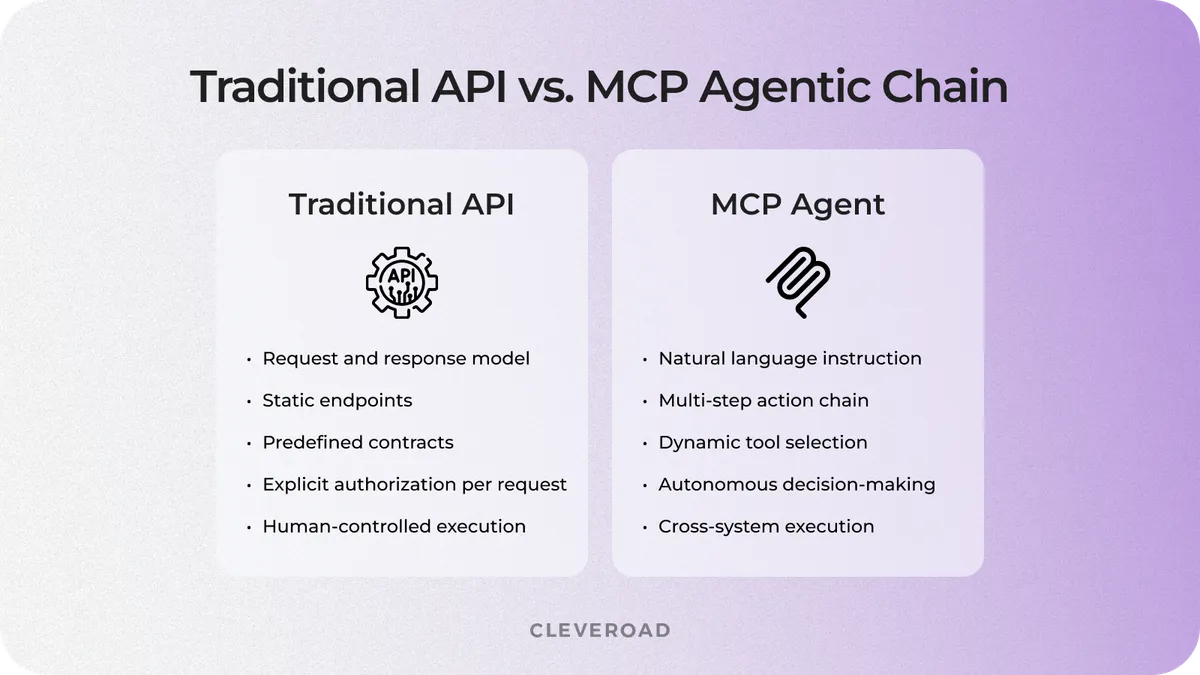

Traditional APIs work on static, predetermined contracts. A client sends a specific request; the server returns a specific response. Authorization is clear: this credential grants access to this endpoint. An MCP client works on an entirely different basis.

An MCP agent receives a natural-language instruction, reasons about which tools to call, determines the sequence of operations, and executes multiple downstream requests without a human explicitly authorizing each step. The security perimeter shifts from "what endpoints exist" to "what decisions is the agent allowed to make."

Anthropic's official MCP introduction describes the protocol as a universal standard for connecting AI assistants to systems where data lives. In practice, organizations often underestimate how quickly this expands the attack surface, especially when agents gain access to multiple systems with different permission models.

The difference becomes clear when comparing how traditional APIs handle requests vs. how MCP agents execute multi-step action chains, as shown in the image below:

Traditional API vs MCP agentic chain

Agent decisions and uncontrolled action chains

When an AI agent has write access to a CRM, a file system, and an email platform simultaneously, it can take consequential actions based on inferred intent. Consider a request like "follow up with the Chicago leads from last week." The agent may first read your CRM records, then compose and send emails, and log the activity in your system.

Each individual action falls within its authorized scope, but the combined effect was never explicitly approved by anyone. In enterprise environments, this pattern can result in unauthorized communication with customers, incorrect data updates across systems, unintended execution of automated workflows, and limited ability to audit how decisions were executed.

The issue lies in how action boundaries are defined: no one specified where the authorized chain of actions was supposed to stop. Traditional authorization models have no concept of an action sequence initiated by a reasoning system; they only know individual requests. This is where many implementations break down, because approval checkpoints and action limits are rarely defined at the sequence level. As a result, security controls validate isolated actions instead of governing the full chain of agent decisions.
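One way to make the chain itself the unit of authorization is to route every tool call through a per-request policy that caps chain length and pauses before side-effecting steps. The sketch below is illustrative only: `ChainPolicy`, `ApprovalRequired`, the tool names, and the step limit are hypothetical and not part of MCP.

```python
from dataclasses import dataclass, field


class ApprovalRequired(Exception):
    """Raised when a step needs explicit human approval before it runs."""


@dataclass
class ChainPolicy:
    # Hypothetical policy: names and limits are assumptions for illustration.
    max_steps: int = 5
    side_effect_tools: frozenset = frozenset({"email.send", "crm.update"})
    approved: set = field(default_factory=set)  # tools a human approved for this request
    steps: list = field(default_factory=list)   # tools already executed in this chain

    def authorize(self, tool: str) -> None:
        """Check one step against the whole chain, not just its own scope."""
        if len(self.steps) >= self.max_steps:
            raise PermissionError(f"chain limit of {self.max_steps} steps reached")
        if tool in self.side_effect_tools and tool not in self.approved:
            raise ApprovalRequired(f"{tool} needs approval after {self.steps}")
        self.steps.append(tool)


policy = ChainPolicy()
policy.authorize("crm.read")           # read-only step passes
try:
    policy.authorize("email.send")     # side effect: the chain pauses here
except ApprovalRequired:
    policy.approved.add("email.send")  # simulate the user approving the step
    policy.authorize("email.send")
```

In the "Chicago leads" example, the CRM read would proceed, while sending email would stop until someone confirms it, so the stopping point of the chain is defined explicitly instead of being left to the agent.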

Top 4 Attack Vectors Security Teams Need to Plan For

MCP security risks concentrate around four core attack vectors that define how agents are manipulated and how access is abused in production systems. Each vector targets a different layer of the MCP model, including the agent’s reasoning logic and its permission control mechanisms.

Let’s take a closer look at how these attack vectors form and why they matter.

How the MCP threat surface forms

The CoSAI Securing the AI Agent Revolution whitepaper identifies 12 threat categories for agentic AI. In MCP systems, 4 of these vectors account for most incidents. The key difference from traditional API vulnerabilities lies in how attacks enter the system and how agents act on them.

To understand how these risks manifest in real MCP deployments and how to mitigate them, review the breakdown below.

| Attack vector | What happens | Real incident | Mitigation direction |
| --- | --- | --- | --- |
| Prompt injection via tool output | Malicious content embedded in an API response hijacks agent behavior | Anthropic's Claude: prompt injection bypassed internal tool restrictions | Treat all tool outputs as untrusted; validate before context injection |
| Tool poisoning or supply chain | A server is modified post-approval to return manipulated outputs | SuperAGI: namespace collision attack exfiltrated data to external domains | Code signing, private registries, immutable tool definitions |
| Over-permissioned scope | Agent is granted broad access and exercises it beyond the user's intent | Asana MCP flaw: tenant isolation failure affected 1,000+ enterprises | Least-privilege scoping; fine-grained RBAC per tool call |
| Hard-coded credentials | Long-lived API keys stored in config files get compromised | Astrix Security 2025: 53% of 5,000+ MCP servers use static credentials | Short-lived tokens, secrets rotation via vault (AWS Secrets Manager) |

What these incidents reveal about MCP security failures

The Asana flaw is the most instructive, as it was a scope definition error that propagated across tenant boundaries. An agent with overly broad permissions for a given operation could access data belonging to other enterprises. The permission model functioned as configured, but the configuration itself was incorrect.

The SuperAGI namespace collision exposed a similar weakness, where a trusted server was modified after approval, highlighting that deployment-time security reviews are insufficient without supply chain controls such as code signing and continuous validation. Together with hard-coded credentials and prompt injection, these vectors span the full MCP interaction lifecycle and require a security architecture that governs all stages, including familiar API-level risks.

Security architecture decisions made during initial scoping cost a fraction of what retrofit work costs once agents are handling production data. The attack vectors above are well-documented. There is no reason to discover them the hard way.

Where Dynamic Authorization Breaks Down at Enterprise Scale

A single natural-language request can silently touch multiple systems, each with a different permission model. Consider a travel-booking agent: it checks a calendar service, queries a flights API, reads the corporate travel policy database, pulls rules from the expense management system, and books a ticket through a booking service. The MCP agent decides how to sequence those calls. No human reviewed that decision chain before it was executed.

This is the runtime scope negotiation problem. The agent needs permissions it did not declare at registration time, and standard OAuth has no mechanism for clean mid-workflow scope escalation. The result is a forced choice: grant broad permissions at setup, or interrupt the user with repeated re-consent requests at every escalation point. Neither option is right for production systems handling sensitive data.

To address this trade-off, it is important to understand how different authorization models behave in practice and where each one starts to break down.

The coarse scope approach: simple but fragile

Predefined scope tiers (e.g., read, write, admin) work well for simple agents in predictable, tightly controlled workflows. They are fast to implement and easy to reason about. The moment an agent needs to escalate mid-task, coarse scopes leave you with an unpleasant binary: over-grant at setup time or fragment the user experience with consent interruptions. For early-stage MCP pilots with limited data exposure, this setup is an acceptable starting point.

The fragility shows when you try to extend the agent's capabilities without extending the permission model. Organizations that start with coarse permission scopes tend to add new tools at the same level as existing ones. Changing the authorization model after the agent is in use is expensive, so the permissions stay broad long after the specific use case has narrowed.

Fine-grained approach: control vs complexity

Rich Authorization Requests (RAR, RFC 9396), used together with OAuth 2.1, encode operation-specific context directly into authorization payloads. The system can verify that this specific user approved this specific action on this specific record. The audit trail is precise, the blast radius of any compromised token is narrow, and your security team has concrete data to work with during an incident investigation.

RAR requires a capable identity provider. Authlete and ForgeRock support it natively. Auth0 and Okta offer partial support through hooks. The implementation effort is higher than with coarse-grained scopes, but for production workloads that handle financial or medical data, the specificity of the authorization record is not a luxury. It is what makes compliance defensible.
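To make "this specific action on this specific record" concrete, here is a minimal sketch of an `authorization_details` entry using field names from RFC 9396 (`type`, `locations`, `actions`, `identifier`). The type name, URL, record ID, and the `is_covered` helper are made-up examples, not the API of any particular identity provider.

```python
import json

# One authorization_details entry in the shape defined by RFC 9396.
# "type" is required; the type name, URL, and record ID are made-up examples.
authorization_details = [
    {
        "type": "crm_record_update",
        "locations": ["https://crm.example.com/api"],
        "actions": ["update"],
        "identifier": "lead-4821",
    }
]


def is_covered(details: list, type_: str, action: str, identifier: str) -> bool:
    """Return True only if an approved entry matches this exact operation."""
    return any(
        d.get("type") == type_
        and action in d.get("actions", [])
        and d.get("identifier") == identifier
        for d in details
    )


# The JSON string is what a client would send as the authorization_details parameter.
request_param = json.dumps(authorization_details)
```

A resource server could run a check like `is_covered` against the details attached to the token before executing a tool call, so an approval to update one lead does not extend to deleting it or to touching another record.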

Which approach fits your stage

The right choice depends on the sensitivity of the data your agents touch and the cost of a scope error in your environment. Most enterprise deployments layer the two: coarse scopes for low-risk operations and RAR-based fine-grained control for anything touching regulated or confidential data.

The table below outlines which authorization approach fits each deployment stage and what trade-offs to expect.

| Deployment stage | Recommended approach | Tradeoff |
| --- | --- | --- |
| Early-stage MCP pilot with limited data access | Coarse scope tiers with strong error feedback and logging | Fast to build; tends to over-grant if not reviewed before go-live |
| Production deployment handling financial or medical data | OAuth 2.1 with RAR-based fine-grained control | Strong audit trail and narrow blast radius; requires more IDP capability and implementation effort |

Whichever approach you start with, build logging from day one. The ability to reconstruct who authorized each action and when is a common requirement across both tiers and is often missing in real deployments. Designing logging correctly requires both security and architectural expertise.

If you need a tech partner to assess your MCP setup and implement the right controls, contact us to review your architecture and define a secure production-ready model.

How to Build a Secure MCP Architecture: The Implementation Sequence

A secure MCP architecture must be built in a specific order. The sequence below reflects what typically fails when security is treated as a parallel workstream. Steps 1–3 must be in place before any agent makes a production tool call, while steps 4–7 are required before handling real user data.

Step 1: Establish identity and enforce secure authentication

Start with identity. Every MCP client and server must authenticate before any action is performed, over TLS with certificate verification enforced and, where practical, certificate pinning. Anonymous connections in production introduce a direct security risk. Identity verification should also be consistently enforced across all tools and services the agent interacts with.
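As a rough illustration of the transport side of this step, the sketch below builds a client TLS context with Python's standard `ssl` module and adds a fingerprint comparison for pinning. The function names `strict_tls_context` and `matches_pin` are hypothetical, and the pinned value would come from your own certificate inventory.

```python
import hashlib
import hmac
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Client context that verifies the server certificate and hostname."""
    ctx = ssl.create_default_context()            # CERT_REQUIRED + hostname check
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse older protocol versions
    return ctx


def matches_pin(der_cert: bytes, pinned_sha256_hex: str) -> bool:
    """Compare the SHA-256 of a DER-encoded certificate with a pinned value."""
    actual = hashlib.sha256(der_cert).hexdigest()
    return hmac.compare_digest(actual, pinned_sha256_hex.lower())
```

After the handshake, `SSLSocket.getpeercert(binary_form=True)` returns the DER bytes that can be passed to `matches_pin`. Pinning adds operational cost at certificate renewal, so it is usually reserved for a small set of high-value endpoints.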

Step 2: Implement short-lived, scoped credentials

Use short-lived tokens tied to specific scopes. Long-lived API keys remain one of the most common sources of credential exposure in MCP deployments. Instead, issue just-in-time credentials scoped to each task and rotate them automatically using a secrets manager such as AWS Secrets Manager or HashiCorp Vault.
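The idea of a just-in-time credential can be sketched with the standard library alone: a signed token that carries exactly one scope and a short expiry. This is a simplified stand-in, not a JWT implementation or a secrets manager API; `SECRET`, `issue_token`, `check_token`, and the scope names are assumptions for illustration.

```python
import base64
import hashlib
import hmac
import json

SECRET = b"demo-only-secret"  # assumption: in practice fetched from a secrets manager


def issue_token(subject: str, scope: str, ttl_s: int, now: float) -> str:
    """Mint a signed token bound to one scope with a short expiry."""
    claims = {"sub": subject, "scope": scope, "exp": now + ttl_s}
    body = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    sig = hmac.new(SECRET, body, hashlib.sha256).hexdigest()
    return f"{body.decode()}.{sig}"


def check_token(token: str, required_scope: str, now: float) -> bool:
    """Accept only an untampered, unexpired token with the exact scope."""
    body, _, sig = token.partition(".")
    expected = hmac.new(SECRET, body.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False
    claims = json.loads(base64.urlsafe_b64decode(body))
    return claims["scope"] == required_scope and now < claims["exp"]
```

For example, a token issued with `issue_token("agent-7", "flights:search", 300, now)` stops working after five minutes and is never valid for a booking scope, which bounds what a leaked credential can do.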

Step 3: Apply least-privilege access at the tool level

Define least-privilege access at the tool level, with permissions scoped per tool. For example, a server that searches flights should not have the same permissions as one that books them. This strategy limits the impact of misconfiguration and reduces the potential blast radius of any compromised access.
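The flights example can be expressed as a deny-by-default permission map. The tool names, permission strings, and `can_call` helper below are hypothetical and only illustrate the shape of a per-tool check.

```python
# Hypothetical tool-to-permission map; tool and permission names are examples.
TOOL_PERMISSIONS = {
    "flights.search": {"flights:read"},
    "flights.book": {"flights:read", "flights:write", "payments:charge"},
}


def can_call(tool: str, granted: set) -> bool:
    """Deny by default: unknown tools or missing permissions are rejected."""
    required = TOOL_PERMISSIONS.get(tool)
    return required is not None and required <= granted


search_agent = {"flights:read"}
```

With this map, `can_call("flights.search", search_agent)` is allowed while `can_call("flights.book", search_agent)` is rejected, and a tool that was never registered is rejected as well.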

Step 4: Validate all runtime inputs and tool outputs

Runtime validation should be established early in the system architecture design. Once a malicious or manipulated tool response enters the agent’s reasoning context, it can influence subsequent actions in ways that are difficult to detect and control.

Treat all tool outputs as untrusted input. Apply strict JSON schema validation before injecting any tool response into the agent’s context. This step helps reduce the risk of prompt injection from external data sources, including scenarios similar to those observed in the Anthropic Claude incident.
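A minimal sketch of that validation step is shown below. It uses a hand-rolled, standard-library check as a stand-in for a full JSON Schema validator; `FLIGHT_SCHEMA`, `parse_tool_output`, and the field names are assumptions for a hypothetical flight-search tool.

```python
import json

# Expected shape of a hypothetical flight-search tool response.
FLIGHT_SCHEMA = {"flight_id": str, "price_usd": (int, float), "seats_left": int}


def parse_tool_output(raw: str, schema: dict) -> dict:
    """Parse a tool response and reject anything outside the expected shape."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("tool output must be a JSON object")
    extra = set(data) - set(schema)
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")
    for key, expected_type in schema.items():
        value = data.get(key)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"field {key!r} is missing or has the wrong type")
    return data
```

Rejecting unexpected fields closes one common channel for smuggling instructions into the agent's context. Structural validation does not inspect the text inside legitimate string fields, so free-text values still need to be handled as untrusted content.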

Step 5: Isolate MCP servers and execution environments

Run MCP servers in isolated environments. Containers provide a useful baseline, but additional isolation layers such as gVisor, Kata Containers, or SELinux profiles are recommended for production systems handling sensitive data. Local MCP servers on developer machines should remain limited to development use and should not have access to production credentials.

Step 6: Establish observability with full audit logging

Ensure comprehensive logging across all agent activity. Logs should be immutable and include full trace context for every tool call, permission request, scope escalation, and agent decision. OpenTelemetry is emerging as a standard for MCP observability, helping engineering and security teams gain the visibility needed to investigate incidents and support compliance requirements.
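One common way to make a log tamper-evident is to chain records by hash, so that editing an earlier entry invalidates everything after it. The sketch below shows the idea with the standard library only; it does not use OpenTelemetry, and the record fields and function names are assumptions.

```python
import hashlib
import json


def append_entry(log: list, actor: str, tool: str, decision: str) -> dict:
    """Append a record whose hash covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    record = {"actor": actor, "tool": tool, "decision": decision, "prev": prev_hash}
    payload = json.dumps(record, sort_keys=True).encode()
    record["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited or mid-chain deleted record breaks it."""
    prev_hash = "0" * 64
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode()
        if record["prev"] != prev_hash or hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True
```

Hash chaining provides tamper evidence rather than immutability: truncating the end of the log goes unnoticed unless the latest hash is also anchored somewhere the writer cannot modify, such as write-once storage.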

Step 7: Secure the MCP supply chain

Shadow deployments refer to MCP servers introduced outside centralized governance, often without full visibility or approval. This creates a supply chain risk that organizations frequently overlook, especially as agent ecosystems scale.

To manage this risk, implement a centralized inventory of MCP servers and automated detection of unregistered instances. This provides the visibility and control needed to maintain a secure and governed MCP environment.

Establish supply chain controls before deploying any MCP server to production. This includes code signing, private registries with security scanning, and a centralized server inventory. Incidents like the SuperAGI namespace collision highlight the risks of post-deployment modification, making continuous monitoring and validation essential.
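Two of these controls, detecting post-approval modification and spotting unregistered servers, can be sketched as a digest comparison against an approved inventory. This is a simplified illustration and not a replacement for code signing; `definition_digest`, `audit_servers`, and the server names are hypothetical.

```python
import hashlib
import json


def definition_digest(tool_definition: dict) -> str:
    """Stable SHA-256 digest of a tool definition (canonical JSON)."""
    canonical = json.dumps(tool_definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


def audit_servers(approved: dict, observed: dict) -> dict:
    """Compare observed servers against the approved inventory.

    Both arguments map a server name to its tool-definition digest.
    """
    return {
        "unregistered": sorted(set(observed) - set(approved)),
        "modified": sorted(
            name for name in observed
            if name in approved and observed[name] != approved[name]
        ),
    }
```

Running a comparison like this on a schedule, with the approved digests recorded at review time, turns a one-off deployment review into continuous validation: a changed tool description or a shadow server shows up in the report instead of going unnoticed.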

Designing and implementing these controls requires both deep security expertise and hands-on experience with real MCP deployments. Many organizations face challenges when moving from prototype to production, where gaps in access control, validation, or observability become critical.

Explore how Cleveroad’s AI development services help you design secure MCP architectures and ensure audit for production systems

What MCP Deployments Get Wrong

Most MCP security guidance is written for companies starting fresh. If your agents are already in production, the self-assessment is a single question: can you answer 'who authorized this action, and when?' for any agent operation in your system? If the answer is no, the risk is real. It directly impacts incident response time and compliance readiness, as well as your ability to maintain customer trust.

Let’s look at three patterns that consistently appear in existing deployments where the answer is no.

Unrotated credentials in production systems

Hard-coded API keys are a standard shortcut during prototyping. They become a liability the moment the prototype graduates to production, which often happens faster than the engineering organization updates the credential model. According to Astrix Security's 2025 research, 53% of live MCP servers still use static credentials. If your keys have not been explicitly rotated since initial development, they have not been rotated at all.

Addressing this issue is relatively straightforward, but it requires updates to live infrastructure, which often leads to delays. Every delay extends the exposure in production environments, which can lead to unauthorized access and slower incident detection. Prioritizing credential rotation helps reduce this risk and strengthens the overall security posture.

Excessive permissions persisting in production

Broad access permissions granted during development rarely get revisited before go-live. An agent that needed write access to a document store during testing to iterate quickly should have read-only access in production, but that review step is skipped more often than delivery teams acknowledge. The Asana tenant isolation failure was not a sophisticated attack. It was a scope definition that stayed too permissive past the point where tightening it would have been straightforward.

A practical strategy is to run a permission audit before promoting any agent from a development environment to production. Map each tool call to the minimum required permission. Any gap between the granted and required permissions increases the potential blast radius of an incident and weakens governance controls.
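The mapping step can be reduced to a set difference between what an agent has been granted and what its tool calls need. The sketch below assumes you can list the minimum permission set per tool; `permission_gap` and the permission names are hypothetical.

```python
def permission_gap(granted: set, tool_calls: dict) -> dict:
    """Compare granted permissions with what the agent's tool calls need.

    tool_calls maps each tool name to the minimum permissions it requires.
    """
    required = set().union(*tool_calls.values())
    return {
        "excess": sorted(granted - required),   # candidates to revoke before go-live
        "missing": sorted(required - granted),  # calls that would fail at runtime
    }
```

For an agent granted `docs:read`, `docs:write`, and `crm:read` whose tools only need the two read permissions, the report lists `docs:write` as excess, which is the write access left over from testing.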

Use our AI Strategy Advisor to assess your architecture, identify access control and credential risks, and define a secure path from prototype to production

Incomplete audit trails in production agent systems

Complete logging is essential for understanding how agent-driven systems behave in production. Without full visibility into every tool call and agent decision, it becomes difficult to reconstruct incidents, demonstrate compliance, or detect subtle security issues that may go unnoticed. Audit logging is the control that makes all other controls measurable and enforceable, yet it is often underdeveloped in systems that evolve from prototypes.

To address this issue, implement end-to-end audit logging with full trace context across all agent interactions. Ensure every tool call, permission request, and decision is recorded, stored immutably, and linked to user identity and execution context. Tools such as OpenTelemetry help standardize observability and provide visibility for incident investigation and compliance reporting.

These issues are resolved at the infrastructure level, where access control models and audit mechanisms are implemented and enforced. In practice, this is where experienced engineering partners like Cleveroad play a critical role, especially when scaling systems in compliance-sensitive environments.

Penneo operates in the digital signature and document verification space, where data-handling standards are non-negotiable. Their engineers collaborated with Cleveroad on the cloud infrastructure layer. That is the same layer where MCP security decisions take effect.

See what Hans Jørgen Skovgaard, CTO at Penneo, says about cooperation with Cleveroad.


How Cleveroad Builds Secure Agentic AI Systems

Over the years, Cleveroad has delivered agentic AI systems for clients in Healthcare and FinTech. We would like to share with you one of our recent portfolio cases to show what MCP security looks like when it is designed into the architecture from day one, not added later.

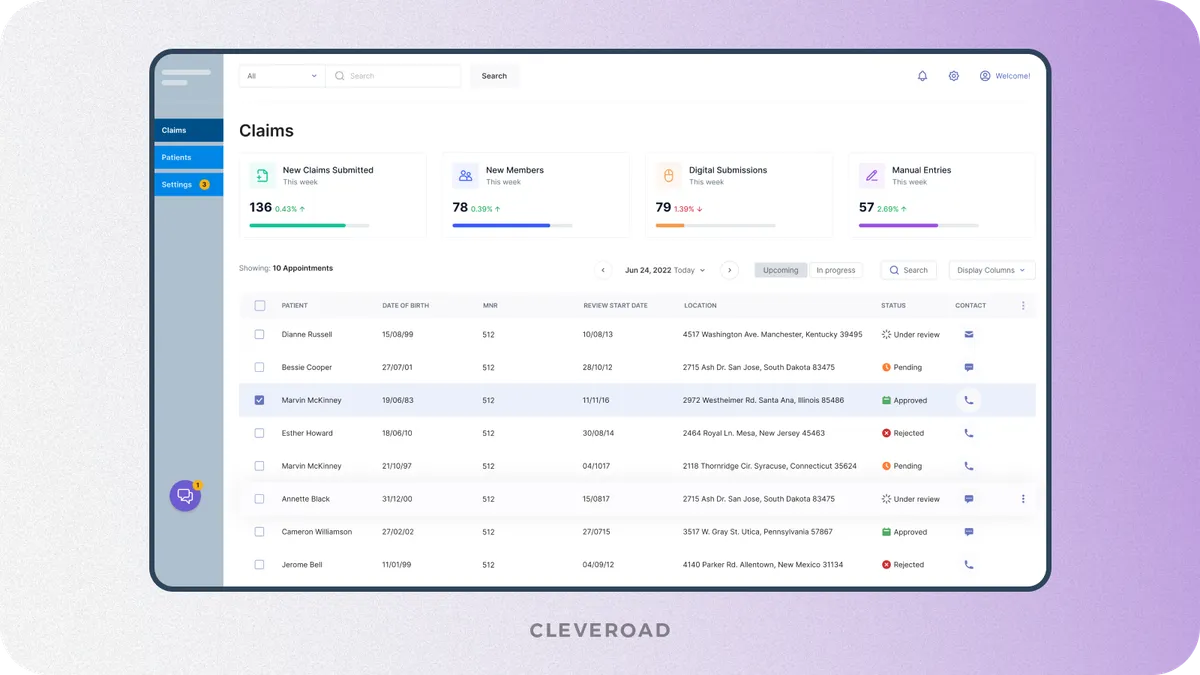

Healthcare agentic AI: automated claims system

Our client needed an AI-driven system to automate health insurance claims processing and reduce reliance on manual clinical document reviews. The goal was to validate treatment codes, ensure policy compliance, and detect anomalies in submitted documentation while working with multiple sensitive data sources such as patient records, insurance policies, and clinical notes.

Cleveroad developed a multi-agent system using an MCP-based architecture, where agents interacted with external systems through controlled tool interfaces. We implemented strict tool-level permissions and real-time audit logging, together with access controls scoped per data category. Each data domain was treated as an independent authorization boundary, ensuring that access to one type of data did not automatically grant access to another while enabling secure end-to-end claims processing.

Healthcare agentic AI system for claims management designed by Cleveroad

As a result, claim resolution time decreased by 60%, reducing average processing from 10 days to 4 days. Fraud detection accuracy improved by 35% through real-time anomaly detection, while automation significantly reduced manual claim review and strengthened compliance through a fully traceable audit trail.

What Cleveroad brings to secure MCP and agentic AI delivery

Cleveroad supports organizations building agentic AI systems where security architecture must be defined upfront. In MCP-based environments, access control models, auditability, and compliance requirements are embedded into the system design before agent workflows are implemented.

By collaborating with Cleveroad, you benefit from:

- Proven experience in secure agentic AI services and MCP-based system design: building agentic AI solutions with controlled tool access, least-privilege permission models, together with runtime validation

- Security-first architecture approach: access control and compliance requirements are defined at the scoping stage, reducing the need for rework before production

- End-to-end delivery of agentic AI systems: from MCP architecture design and integration to deployment and post-launch monitoring

- Collaboration with an ISO-certified partner: aligned with ISO 27001 information security and ISO 9001 quality management standards

- Scalable engineering capacity: 280+ in-house engineers across Europe and the US, experienced in delivering secure enterprise systems

If you are planning an agentic AI system or reviewing an MCP deployment, involving security architecture early helps reduce risk and control costs, while ensuring production readiness.

Build secure MCP AI systems with a trusted partner

Our AI experts help you design secure MCP architectures with proper access control, auditability, and compliance for production-ready agentic AI systems

Hard-coded, long-lived credentials combined with over-permissioned scopes. According to Astrix Security's 2025 research, 53% of MCP servers still use static credentials. The Asana tenant isolation flaw demonstrated what over-permissioned scope looks like at enterprise scale: up to 1,000 companies affected by a single misconfiguration. The risk is documented and specific, not theoretical.

Standard OAuth 2.0 is a starting point, not a complete solution for MCP security. MCP agents make dynamic, runtime permission requests that OAuth 2.0's static scope model was not designed to handle. At minimum, implement OAuth 2.1 with PKCE. For production deployments handling financial or medical data, OAuth 2.1 with Rich Authorization Requests (RAR) is required. It encodes operation-specific context and produces the kind of authorization record that makes compliance defensible.

In a traditional API, a human or application sends a predetermined, explicit request to a specific endpoint. In an MCP deployment, an AI agent autonomously decides which tools to call, in what order, with what data, based on a natural language instruction. The attack surface includes the agent's reasoning and decision-making chain, not just the API endpoint. Standard API authorization models have no concept of a sequenced action chain initiated by a reasoning system.

A modified or malicious MCP server returns altered tool outputs designed to manipulate agent behavior: injecting false data, redirecting actions to unauthorized endpoints, or exfiltrating information to external domains. It is structurally similar to a software supply chain attack, operating at the AI tool layer. The SuperAGI namespace collision is a documented real-world example. The server was approved and trusted before the attack occurred.

Yes, and the difference is significant. Local servers run on developer machines that typically have broad access to credentials, SSH keys, and file systems. They are harder to govern, monitor, and audit than remote servers in isolated environments. Local MCP servers should be treated as developer tooling only:

- Remote MCP servers in sandboxed environments offer better isolation, reproducibility, and incident response capability

- Local servers must never hold access to production credentials. Enforce this architecturally, not just as policy.

Evgeniy Altynpara is a CTO and member of the Forbes Councils’ community of tech professionals. He is an expert in software development and technological entrepreneurship and has 10+ years of experience in digital transformation consulting in Healthcare, FinTech, Supply Chain, and Logistics.
