3 Efficient Ways to Supply Your App with a Virtual Assistant

Updated 21 May 2023

Talking to artificial intelligence is no longer science fiction. Living in your phone, watch, or TV, it can search the web, plan daily routines, or even control domestic machines on your behalf. The ecosystem and scope of activity of virtual assistants are growing rapidly. A day when we won't be able to live a normal life without them is certainly not far off.

The onrush of technology opens new opportunities for users, but it can also be challenging for developers. In the near future, a voice interface in an app may be considered par for the course, and applications without one may end up with the short end of the stick. So, if you want to keep up with the times, give careful consideration to smart voice innovations. Below, we look at how mobile apps can be supplied with virtual assistants and talk a bit about how to create an AI assistant.

How to Include a Voice Assistant in an App

There are three ways to make your app understand verbal language and keep up a conversation.

- The first method involves integrating existing voice technologies into your application by means of special APIs and other development tools.

- The second method allows you to build an intelligent assistant with the help of open-source services and APIs.

- The third method is to create your own voice assistant from scratch and then integrate it into your application.

Each method is worthy of attention. Note that big names like Apple and Google are reluctant to open their beloved creations to third-party developers. On the other hand, open-source tools may not meet your expectations, and creating an AI assistant like Siri on your own may prove an impossible task.

To clarify the benefits and risks you are going to encounter, let's consider each approach in detail.

The best virtual assistants and their integration in an app

Siri, Google Now, and Cortana are world-famous names. Of course, there are many more mobile assistant apps on the shelves of app stores. You can check out a comprehensive list here. However, we are going to focus on the three technologies mentioned above because, according to the MindMeld research, they are preferred by the majority of users.

Siri

If you have ever studied Siri, you certainly noticed that it used to be unavailable to most third-party applications. With the release of iOS 10, the situation changed a lot. At WWDC 2016, Apple announced that Siri can be integrated with apps that work in the following areas:

- Audio and video calls

- Messaging and contacts

- Payments via Siri

- Photos search

- Workout

- Car booking

To enable the integration, Apple introduced SiriKit, which consists of two frameworks, Intents and Intents UI. The first one covers the range of tasks to be supported in your app, and the second one provides a custom visual representation when one of the tasks is performed.

Each of the app types above defines a certain range of tasks which are called intents. The term refers to the users' intentions and, as a result, to the particular scenarios of their behavior.

In SiriKit, all intents have corresponding custom classes with defined properties. The properties accurately describe the task they belong to. For instance, if a user wants to start a workout, the properties may include the type of exercise and the length of a session. Having received the voice request, the system fills in the intent object with the defined characteristics and sends it to the app extension. The extension processes the data and shows the correct result as output.
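To make the flow concrete, here is a minimal sketch in Python rather than Swift. It is not SiriKit code: `StartWorkoutIntent` and `handle_start_workout` are hypothetical names chosen for illustration. It only shows the idea of an intent object whose properties are filled in from the request and then passed to a handler that plays the role of the app extension.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StartWorkoutIntent:
    """Hypothetical stand-in for an intent class; not a real SiriKit type."""
    workout_name: Optional[str] = None      # type of exercise, e.g. "running"
    duration_minutes: Optional[int] = None  # requested session length


def handle_start_workout(intent: StartWorkoutIntent) -> str:
    """Plays the role of the app extension: check the intent, then act on it."""
    if intent.workout_name is None:
        # A required property is missing, so ask the user instead of guessing.
        return "Which workout would you like to start?"
    if intent.duration_minutes is None:
        return f"Starting an open-ended {intent.workout_name} workout."
    return f"Starting a {intent.duration_minutes}-minute {intent.workout_name} workout."


# The system would fill in these properties from the spoken request.
intent = StartWorkoutIntent(workout_name="running", duration_minutes=30)
print(handle_start_workout(intent))
```

Keeping the properties optional lets the handler ask a follow-up question when the user leaves something out, instead of failing.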

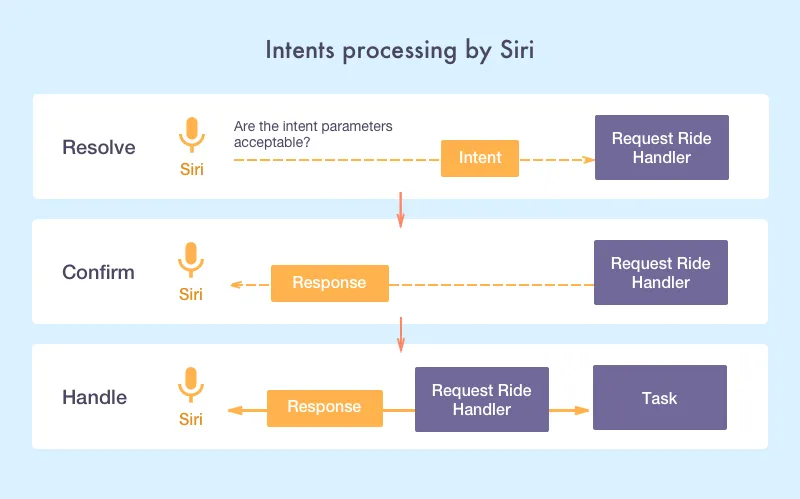

You can find more information about how to work with intents and objects on Apple's official website. Below is a scheme on intents processing:

How Siri processes intents

Google Now and voice actions

Remember the good cop, bad cop technique? Well, Google has always been the good cop, showing maximum loyalty to developers. Unlike Apple, Google doesn't impose strict design requirements. The approval process on Google Play is also much shorter and less fastidious than in the Apple App Store.

Nevertheless, when it comes to smart assistant integration, Google appears quite conservative. For now, its assistant works with selected apps only. The list includes such hot names as eBay, Lyft, Airbnb, and others. They are allowed to make their own Now Cards via a special API.

The good news is that you still have a chance to create a Google Assistant command for your own app. For that, you need to register the application with Google.

Remember not to confuse Google Now with voice commands. Now is not just about listening and responding; it is an intelligent system that can learn, analyze, and draw conclusions. Voice actions are a narrower concept: they work on the basis of speech recognition followed by an information search.

Google provides developers with a step-by-step guide for integrating such functionality into an app. The Voice Actions API lets you include a voice mechanism in both mobile and wearable apps.

Create your own google assistant command: What is Google's intelligent mechanism

Cortana

Microsoft encourages developers to use the Cortana voice assistant in their mobile and desktop apps. You can give users an opportunity to set up voice control without calling Cortana directly. The Cortana Dev Center describes how to make a request to a specific application. Basically, it offers three ways to integrate the app name into a voice command:

- Prefixal, when the app name stands at the start of the speech command, e.g., 'Fitness Time, choose a workout for me!'

- Infixal, when the app name is placed in the middle of the vocal command, e.g., 'Set a Fitness Time workout for me, please!'

- Suffixal, when the app name is put at the end of a command phrase, e.g., 'Adjust some workout in Fitness Time!'
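The three placements can be told apart with a few lines of code. The sketch below is only an illustration of the idea and does not use any Cortana API; `app_name_position` is a hypothetical helper that reports where the app name sits in a recognized phrase.

```python
def app_name_position(command: str, app_name: str) -> str:
    """Return 'prefix', 'infix', 'suffix', or 'absent' for the app name's place."""

    def words(text: str) -> list:
        # Lowercase and replace punctuation so that "Time!" matches "time".
        cleaned = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in text.lower())
        return cleaned.split()

    cmd, name = words(command), words(app_name)
    n = len(name)
    for start in range(len(cmd) - n + 1):
        if cmd[start:start + n] == name:
            if start == 0:
                return "prefix"
            if start + n == len(cmd):
                return "suffix"
            return "infix"
    return "absent"


print(app_name_position("Fitness Time, choose a workout for me!", "Fitness Time"))
print(app_name_position("Set a Fitness Time workout for me, please!", "Fitness Time"))
print(app_name_position("Adjust some workout in Fitness Time!", "Fitness Time"))
```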

You can activate either a background or a foreground app with speech commands through Cortana. The first type is suitable for apps with simple commands that don't require additional instructions, e.g., 'Show the current date and time!'. The second suits apps that work with more complex commands, like 'Send the Hello message to Ann'. In the latter case, besides setting the command, you specify its parameters:

- What message? - Hello message

- Who should it be sent to? - To Ann.
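A command with parameters can be pictured as a template with named slots. The following sketch assumes a hypothetical template, "Send the <message> message to <recipient>", and extracts both parameters with a regular expression. Cortana defines such commands in its own format, so treat this purely as an illustration of the concept.

```python
import re

# Hypothetical command template: "Send the <message> message to <recipient>".
SEND_MESSAGE = re.compile(
    r"^send\s+the\s+(?P<message>.+?)\s+message\s+to\s+(?P<recipient>.+?)[.!]?$",
    re.IGNORECASE,
)


def parse_send_message(command: str):
    """Return the command's parameters, or None if the phrase does not match."""
    match = SEND_MESSAGE.match(command.strip())
    if match is None:
        return None
    return {"message": match.group("message"), "recipient": match.group("recipient")}


print(parse_send_message("Send the Hello message to Ann"))
print(parse_send_message("Show the current date and time!"))
```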

Independent services to make your own voice assistant

The above technologies are not the only option for adding voice control to your app. There are numerous tools for developers who are keen on machine thinking. For your reference, I have arranged a list of the most notable ones. Both mobile and web services are included.

Melissa

Melissa is a real find for newcomers to development who want to create a custom voice assistant. The whole system consists of many parts, so if you want to add or modify a certain feature, you can do it without changing the complete algorithm.

Melissa can speak, take notes, read news, upload pictures, play music, and do many other things. Written in Python, it works on OS X, Windows, and Linux. The web interface is developed with the help of JavaScript.

Jasper

Jasper would be suitable for those who prefer to program the biggest part of the artificial intelligence without external support and create a custom AI assistant relying on themselves. It is also a great tool for Raspberry Pi fans because it runs on its Model B.

Jasper is written in Python. It can listen and learn. The first capability is introduced by the active module, the second by the passive one. Always on, it is ready to perform tasks at any moment of the day or night. Silently studying your habits, it can provide you with precise information just in time.

So, if you want to build your own voice assistant, you should consider Jasper.

Api.ai

Api.ai covers a wide range of tasks, allowing you to make your own personal assistant. Along with voice recognition, it also supports converting voice into text followed by the execution of the relevant tasks. Analyzing and drawing conclusions isn't alien to this service either.

Api.ai has both free and paid versions. The latter enables working in a private cloud. So, if privacy is your priority, this is just what you need.

Api.ai provides APIs and SDKs for a wide range of platforms, including iOS, Android, Windows Phone, Cordova, Python, Node.js, Unity, C#, and others.

Wit.ai

Wit.ai is similar to the Api.ai service. There are two elements to set up in your app if you want to use it: intents and entities. As in the Siri system, an intent stands for the action that a user wants to perform, e.g., show the weather. Entities clarify the characteristics of a given intent, e.g., the time and place of a user.
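The split between intents and entities is easy to picture with a toy example. The sketch below does not call Wit.ai or reproduce its API: the keyword table, the patterns, and the `understand` function are all hypothetical, and a real service would use trained models instead of keyword matching. It only shows the shape of the result, an intent plus the entities that refine it.

```python
import re

# Hypothetical intent catalogue: intent name -> trigger keywords.
INTENT_KEYWORDS = {
    "show_weather": ("weather", "forecast"),
    "set_alarm": ("alarm", "wake"),
}

# Hypothetical entity patterns; the place pattern captures a single word only.
ENTITY_PATTERNS = {
    "time": re.compile(r"\b(today|tonight|tomorrow)\b"),
    "place": re.compile(r"\bin ([a-z]+)\b"),
}


def understand(utterance: str) -> dict:
    """Map an utterance to an intent and the entities that clarify it."""
    text = re.sub(r"[^\w\s]", "", utterance.lower()).strip()
    tokens = text.split()

    intent = None
    for name, keywords in INTENT_KEYWORDS.items():
        if any(keyword in tokens for keyword in keywords):
            intent = name
            break

    entities = {}
    for label, pattern in ENTITY_PATTERNS.items():
        match = pattern.search(text)
        if match:
            entities[label] = match.group(1)
    return {"intent": intent, "entities": entities}


print(understand("What's the weather in Paris tomorrow?"))
```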

One pleasant thing is that you don't need to create the intents on your own. Wit.ai provides developers with a long list to choose from. Another piece of good news is that it is totally free for both public and private usage. However, to create your own personal assistant with the help of Wit.ai, you should follow its terms.

Like Api.ai, Wit.ai offers a variety of APIs for iOS, Android, Ruby, Python, Windows Phone, C, and Raspberry Pi developers. Frontend developers will be delighted with the fact that it also has a plugin for JavaScript. It's a great solution for creating a voice assistant for various platforms.

Services for voice assistant development

How to build your own AI assistant app?

If you intend to make your own Siri or Google assistant, make sure that you possess the appropriate skills and resources, because this process is far from simple.

Basic technologies to build a voice assistant

Voice/speech to text (STT)

This is the process of converting a speech signal into digital data (e.g., text data). The voice may come as a file or a stream. You can use CMU Sphinx for its processing.

Text to speech (TTS)

This is the opposite process, which translates text or images into human speech. It is very useful when, for instance, a user wants to hear the correct pronunciation of a foreign word.

Intelligent tagging and decision making

Intelligent tagging and decision making serve to interpret the user's request. For example, the user may ask: 'What do I watch tonight?'. The technology will tag the top-rated movies and suggest a few according to the user's interests. The AlchemyAPI may help you build an AI assistant that can cope with this task.

Image recognition

Image recognition is an optional but very useful feature. Later, you can use it for developing multimodal speech recognition. Have a look at OpenCV if you want to create an AI assistant with this feature under the hood.

Noise control

Noise from cars, electrical appliances, and other people talking nearby makes the user's voice unclear. This technology reduces or totally eliminates the background noise that prevents correct voice recognition. If you want to build your own personal assistant, this feature can serve as a good addition that will enhance the overall user experience.
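The simplest form of noise control is a noise gate, which silences stretches of audio that are too quiet to be speech. The sketch below is a deliberately naive illustration under assumed values (samples normalized to the range -1.0 to 1.0, a frame of four samples, a threshold of 0.1). Production systems typically rely on more advanced techniques that also clean up noise while the user is talking.

```python
def noise_gate(samples, frame_size=4, threshold=0.1):
    """Silence every frame whose RMS energy falls below the threshold."""
    cleaned = []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        # Root mean square: a rough measure of how loud the frame is.
        rms = (sum(s * s for s in frame) / len(frame)) ** 0.5
        cleaned.extend(frame if rms >= threshold else [0.0] * len(frame))
    return cleaned


# First frame: faint background hum. Second frame: the user's voice.
audio = [0.01, -0.02, 0.015, -0.01, 0.5, -0.6, 0.55, -0.4]
print(noise_gate(audio))
```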

Voice Biometrics

This is a very important security feature that you should take into account when creating your own AI assistant. Thanks to this feature, the voice assistant can identify who is talking and whether it is necessary to respond. Thus, you may avoid the comic situation that happened to Siri and Amazon Alexa when they lowered the temperature in a house and even turned off someone's thermostat after hearing a relevant command from the TV speakers.
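One common way to decide who is talking is to compare a numeric "voiceprint" of the incoming speech with the one stored for the enrolled user. The sketch below assumes such feature vectors already exist. The three-number vectors, the 0.9 threshold, and the function names are made up for illustration, and extracting real voiceprints from audio is a separate task not shown here.

```python
import math


def cosine_similarity(a, b):
    """Similarity of two equal-length vectors, from -1.0 to 1.0."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def is_enrolled_speaker(voiceprint, enrolled, threshold=0.9):
    """Respond only if the incoming voiceprint is close enough to the owner's."""
    return cosine_similarity(voiceprint, enrolled) >= threshold


owner = [0.9, 0.1, 0.4]            # hypothetical stored voiceprint
same_person = [0.85, 0.15, 0.42]   # the owner speaking again
tv_speaker = [0.1, 0.9, 0.2]       # a voice coming from the TV

print(is_enrolled_speaker(same_person, owner))
print(is_enrolled_speaker(tv_speaker, owner))
```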

Speech compression

With this mechanism, the client side of the application compresses the voice data and sends it to the server in a compact format. This keeps the application fast, without annoying delays. To implement this mechanism, you can use the G.711 standard.
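G.711 is built on companding: quiet samples keep fine detail while loud ones are stored more coarsely, so each sample fits in 8 bits. The sketch below implements the continuous mu-law curve with mu = 255. Note that this is an assumption-laden simplification: G.711 itself specifies a segmented approximation of this curve (and an A-law variant), so the code illustrates the principle rather than producing standard-compliant bytes.

```python
import math

MU = 255  # companding parameter of the mu-law curve


def mu_law_encode(sample: float) -> int:
    """Compress a sample in [-1.0, 1.0] into one byte value (0..255)."""
    sample = max(-1.0, min(1.0, sample))
    compressed = math.copysign(math.log1p(MU * abs(sample)) / math.log1p(MU), sample)
    return int(round((compressed + 1) / 2 * 255))


def mu_law_decode(code: int) -> float:
    """Expand a byte value back into an approximate sample in [-1.0, 1.0]."""
    compressed = code / 255 * 2 - 1
    return math.copysign(math.expm1(abs(compressed) * math.log1p(MU)) / MU, compressed)


for original in (-1.0, -0.01, 0.0, 0.01, 0.5, 1.0):
    code = mu_law_encode(original)
    print(original, code, round(mu_law_decode(code), 4))
```

The round trip is lossy, but the error stays small for quiet samples, where the ear is most sensitive.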

Voice interface

Voice interface is what the user hears and sees in return to his or her request. For the voice part, you will need to pick up the voice itself, set the rate of speech, the manner of speaking, etc. For the visual part, you will have to decide on the visual representation that a user is going to see on the screen. If reasonable, you can skip it at all and make your own AI assistant without these adjustments.

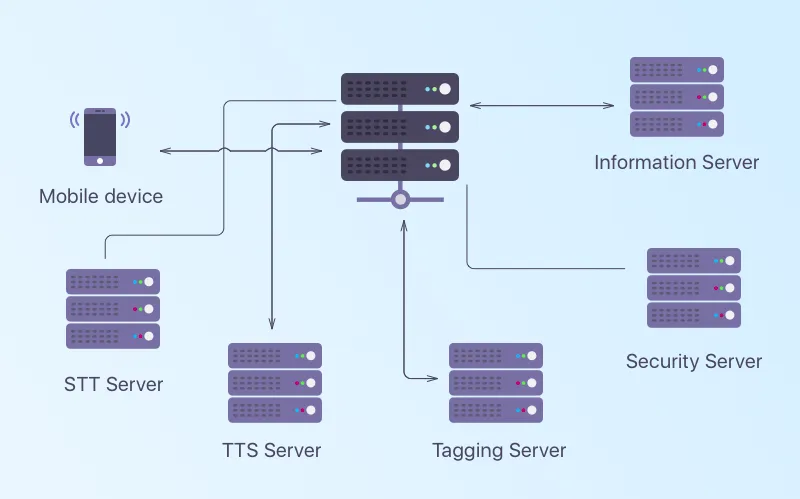

Note that voice and text data may be processed either on a server or directly within a device. In the picture below, we have shown the scheme that works with the server participation.

Mobile voice assistants architecture
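The server-side part of such a scheme can be thought of as a chain of the technologies described above: speech to text, decision making, and text to speech. The sketch below wires three stub functions together to show the order of the steps. Every function here is a hypothetical placeholder: the "audio" is simply text encoded as bytes, and real recognition and synthesis engines would replace the stubs.

```python
def speech_to_text(audio: bytes) -> str:
    # Stub: a real system would run a speech recognizer here.
    return audio.decode("utf-8")


def decide(text: str) -> str:
    # Stub for tagging and decision making: pick a reply for the request.
    if "time" in text.lower():
        return "It is noon."
    return "Sorry, I did not understand."


def text_to_speech(text: str) -> bytes:
    # Stub: a real system would synthesize audio here.
    return text.encode("utf-8")


def server_handle(audio: bytes) -> bytes:
    """Server side: recognize the request, decide on a reply, voice the reply."""
    return text_to_speech(decide(speech_to_text(audio)))


# The client would record and compress the audio, then send it to the server.
print(server_handle(b"What time is it?"))
```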

Making decisions

As you can see, each approach has its weak and strong points. Siri, Google Now, and Cortana are well known among users. A lot of people would prefer interacting with a familiar and trusted technology. Yet, these mobile assistants have some limitations when it comes to integration with third-party apps. Also, they differ in their functionality and run on specific platforms. These factors limit the flexibility of development.

Alternative solutions greatly simplify the process of implementation. Following their instructions, you can enable voice recognition in your app. However, you might not have the freedom to make significant changes and add extra features.

The main advantage of independent development is that you are free to implement whatever you like. The main con, though, is that it is a very complicated process that will take a lot of time and effort.

If you still hesitate about making a final decision, contact our managers. Depending on the specifics of your project, they will recommend an appropriate solution.

There are three ways to add a voice assistant to an app:

- Integrate existing voice technologies like Siri, Google Now, or Cortana into your app using specific APIs and other dev tools.

- Build a smart assistant using open-source services and APIs like Wit.ai or Jasper.

- Create your own voice assistant from scratch using STT, TTS, intelligent tagging, and so on, and integrate it into your app.

You can build a voice assistant with these technologies:

- Voice/speech to text (STT), CMU Sphinx

- Text to speech (TTS)

- Intelligent tagging and decision making, AlchemyAPI

- Image recognition, OpenCV

- Noise control

- Voice Biometrics

- Speech compression, G.711 standard

- Voice interface

The process is far from simple, so if you have little to no tech background, it's better to delegate software dev services to a tech vendor.

You build a voice assistant app just like any other app, but with specific tools for introducing the voice assistant. Here are the basic steps:

- Sketch your idea.

- Do some market research.

- Pay attention to your app's future design.

- Use the right technologies to build the app (or contact your tech partner).

- Market your app to reach the right people.

There's a bunch of tools that'll help you, but the most popular ones are:

- Melissa. Speaks, takes notes, reads news, uploads pictures, plays music, etc.

- Jasper. Listens (via the active module) and learns (via the passive module). Studies the user's habits and can provide exact information just in time.

- Api.ai. Along with voice recognition, it supports converting voice into text and executes relevant tasks.

- Wit.ai. There are two elements to set up: intents that mean actions (show the weather) and entities that clarify the intent (the time and place of a user).

Voice assistants work thanks to the ASR (Automatic Speech Recognition) system of your device. It records the speech, makes an acoustic analysis, decodes the command, and turns it into text to use as a command.

Voice assistants can do plenty of things. They answer questions, play music, manage timers, control your smart home or office, and do basic things like sending emails, making to-do lists, and so on.

Evgeniy Altynpara is a CTO and member of the Forbes Councils’ community of tech professionals. He is an expert in software development and technological entrepreneurship and has 10+ years of experience in digital transformation consulting in Healthcare, FinTech, Supply Chain and Logistics.

Comments

8 comments

Thanks for lot of details.

Thanks

thank you

This is very nice info, thanks.

Okay the thing is, how do I subscribe this blog I don't get it.

I am beginner to the world of AI and this article gave me a motive to continue in my path,Thank you CLEVEROAD

I want make an app like google Go I Know Python and Which framework suits better

Informational Post! Thanks for Sharing.